Yet Another Farmers Market Dashboard … or Why PDFs Are Still a PITA

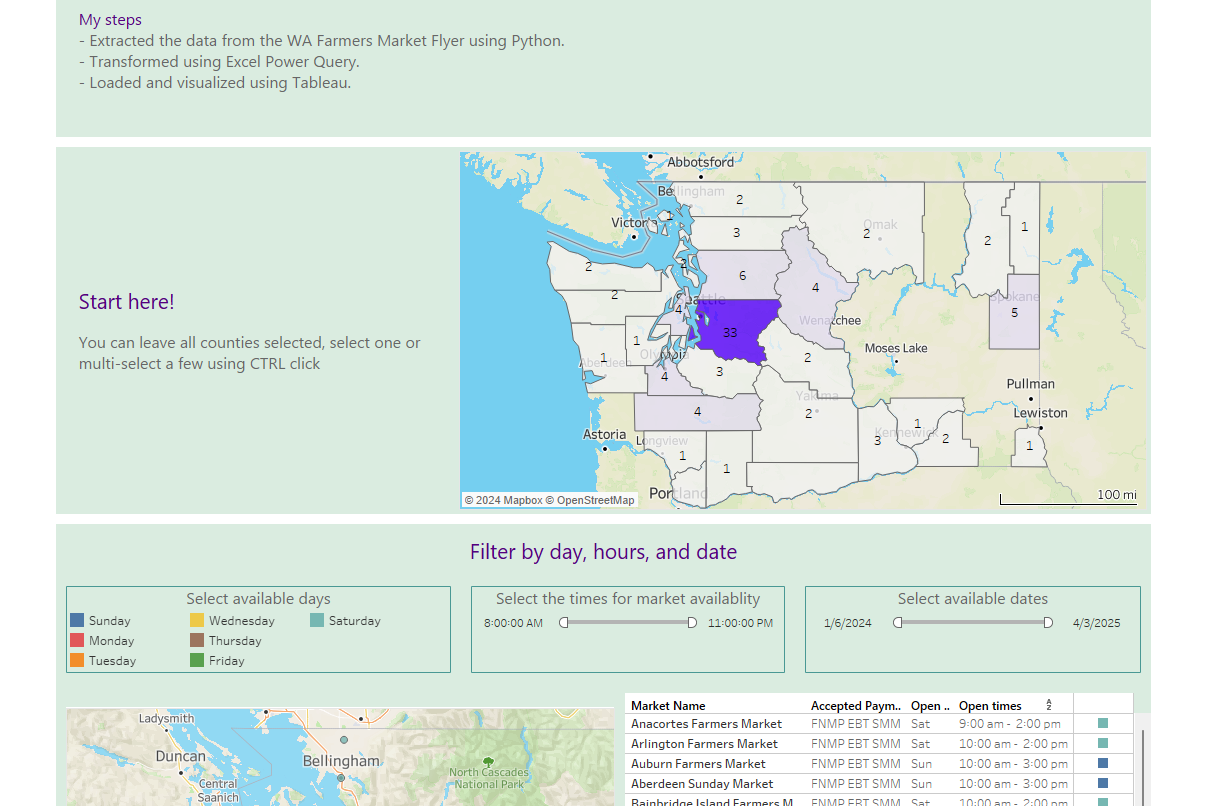

I thought it would be nice to get away from the Seattle Police Department dataset for a bit and focus on something fun, so I started imagining a Washington State Farmers Market dashboard for myself.

Dataset

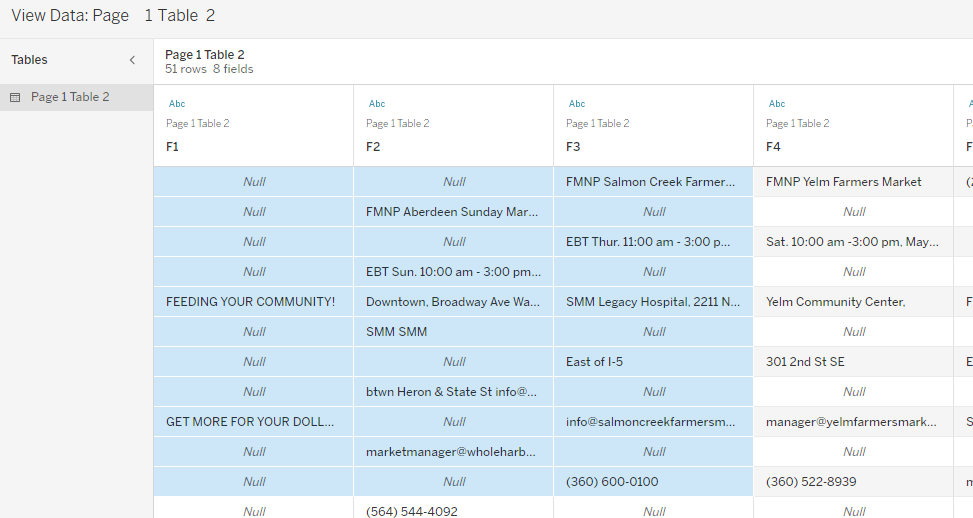

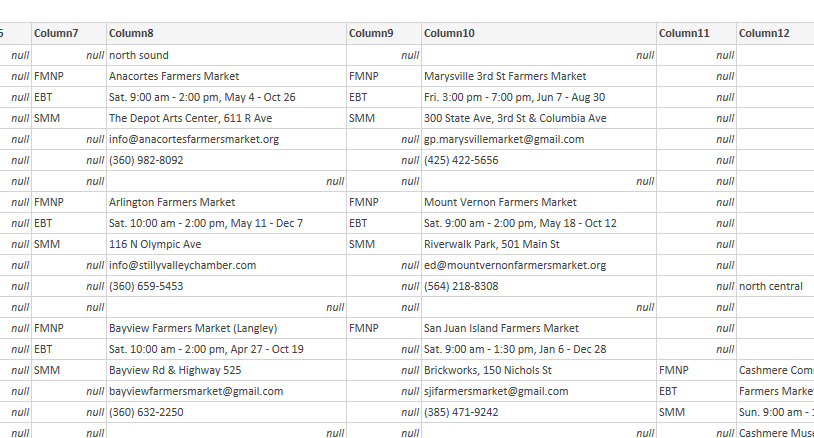

Washington State Farmers Markets Association has a their dashboard and PDF flyer. I considered creating a robot to scrape the data. Then I examined the flyer. You can see there’s payment info next to the market info, for each one, which seemed like a simple enough pattern.

Every PDF extraction tool I’ve used never seems to import the data in the format I need, but the Python libraries – pdfminer3 is my favorite – are always reliable. I contacted the organization for the dataset, thinking that by the time I found the correct contact or got a reply, I could have extracted 80% of what I needed using Python. So, I went ahead and started coding and planned to look at how Power BI, Tableau, and ChatGPT handle this later.

The dataset is extracted from the PDF flyer.

The Dashboard

Why PDF Parsing is Still a Pain

PDF parsing remains a challenge because it's not a file format that guarantees text or information in the expected order. According to Tim Allison who wrote a white paper on the Brief Overview of the Portable Document Format and Some Challenges for Text Extraction,

…text is stored as a run with a coordinate on the page as a start point and then character codes. There is no logical connection between the runs, and there is no requirement that a single run contain a meaningful unit (e.g., word, sentence, paragraph) or that text is rendered in reading order.

This means that I totally lucked out on the fact that:

Accepted types of payment information (FNMP, EBT, SMM) happened to always be contained within a text box.

The farmers market details always happened to be contained within the same text box.

However, if I wanted to group by region, finding everything under the region Olympic Peninsula wasn’t happening because “Olympic” was broken up . Most of the other regions in that PDF were fine. Breaking this up by region wasn’t needed and wasn’t worth it.

Let’s go over some tools in the order I evaluated them:

PDFMiner

PDFMiner3 is reliable and consistent. You load up the PDF, get all the text containers, their positions on the page, and the text within. Write your favorite algorithm to evaluate containers and spit out a CSV file for further cleaning in a tool like Excel Power Query.

Adobe

Adobe provides some Python libraries that require an API key. As Tim Allison mentioned in the same white paper, it uses a machine learning algorithm to pull and group text from the document. Looking at a couple of extraction libraries, I got results like:

"Page": 0,

"Path": "//Document/Sect[6]/P[6]",

"Text": "FMNP West Seattle Farmers Market EBT Sun. 10:00 am -2:00 pm, Jan 7 - Dec 29 SMM California Ave SW and SW Alaska St ",

"TextSize": 7.0

This is pretty good. The accepted payment types (FNMP, EBT, SMM) are intermingled with the market info and can be extracted separately but with pdfminer the text in each box is kept in it’s original format and easier to parse.

Tableau PDF Tools

Nope.

ChatGPT

ChatGPT is full of it hallucinating.

Me: Could you tell me how many farmers markets are listed in the PDF?

Me: I meant PDF.

ChatGPT: No problem! Please upload the PDF file, and I'll check the number of farmers markets listed in it.

Me: I upload the flyer.

ChatGPT: The PDF file lists 105 farmers markets.

I was impressed, but the Python code had:

# Count the occurrence of the phrase "Farmers Market" farmers_market_count += text.lower().count("farmers market")Which I know doesn’t work. So what’s going on here?

Me: Show me the list.

ChatGPT: Here is the list of farmers markets extracted from the PDF:

Blah blah blah – I get 25 and ask for the full 105 it told me about.

• Snohomish Farmers Market

• Stanwood Farmers Market

• Fairwood Farmers Market (duplicated name)

• Hillyard Farmers Market (duplicated name)

• Millwood Farmers Market (duplicated name)

Note: Some markets appear multiple times in the document, leading to duplication in the list. The total unique entries might be fewer than 105 if we remove duplicatesThere are no duplicate entries. I counted.

I’m wondering how it got to a correct count of 105. It seems it could recognize the entries but couldn’t figure out how to find the rest. I’m not sure. It's good to know that eventually it will get there, but not today.

Power BI

Did a great job of keeping the payment data with the market data but for now I’m sticking with Python data extraction.

A Few Other ETL Tasks

Data Cleaning:

If you look at Figure 3 , you could probably search for everything with “Farmers Market” and hope for the best. However, variations like “Sunday Market” and Tenino’s typo "Famers Market" were interesting. The last one made me laugh as it took me the longest to find.

Data Transformation – Power Query in Excel:

My love for this feature grew immensely. The amount of code that I did not have to write to slice up the data into the correct columns saved me so much time. It’s nice to get to a point where you’re happy with your data cleaning and know that Excel can take care of the rest.

Data Loading in Tableau and Google Geocodes:

Loading the data into Tableau from Excel is fairly straightforward, but I needed more geodata than what was provided on the PDF flyer. Google provides geocodes (longitude and latitude) given an address. Their free usage tier was enough, and once you’re happy with the updated addresses and additional city and regional data for all the markets, you’re done. I used a Python script to pull all the geocodes from my extracted data for each market and left it in a CSV file to join later.

Conclusion

PDF Tools: Better the devil you know than the one you don’t. I knew immediately looking at the PDF it wouldn’t take long to extract using libraries available via Python but there’s some great improvements that need to be made in this space for other tools.

Power Query Transformation are efficient. You could do it in Python, but the having Excel (or Power BI) convert your transformation steps into code, that you can resuse over different data as you experiment is far better and more reliable than writing the code yourself.

I am not a designer. Making dashboards that are pretty to look at is a skill I don’t have yet. I’ll stick with the data parts for now.